STATUS: DEPLOYED ON AWS EKS

THE CORE PRODUCT

VIDEO HARNESS

An LLM-driven video production runtime where Claude is the director and the tools are the crew. You give it a messy brief. It reconstructs the creative intent, plans shots, routes each shot to the right model, generates frames, evaluates quality, and delivers a finished MP4, with every decision logged and every dollar gated by human approval.

THE PROBLEM

Video AI models are unstable, expensive, and opaque. Seedance costs $0.35/generation. Kling hallucinates faces. Sora ignores half the prompt. There's no quality guarantee, no cost control, no audit trail. Production teams treat AI video like a slot machine — pull the handle, pray.

THE SOLUTION

Video Harness (VH) wraps these models in a contract-first runtime. The LLM understands the creative brief. It plans the approach. It routes to the right model for each shot. And before any money leaves your account, a human approves the plan. Not luck. System.

THE FULL PIPELINE // EVERY STEP IS A CONTRACT

STEP 01

SOURCE

Messy brief in. LLM reconstructs creative intent into structured script + asset plan.

STEP 02

DIRECTOR

Claude plans shots, selects models per scene, generates prompts optimized for each provider.

STEP 03

ARTIST ×3

Seedream renders first-frames. Seedance generates video. Parallel execution across providers.

STEP 04

QA → APPROVE → MP4

Quality evaluation with counterfactual baselines. Human approves spend. Output: production-ready MP4.

EVERY STEP WRITES TO A PROVENANCE LEDGER // EVERY SPEND IS GATED // EVERY DECISION IS TRACEABLE
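
One way to picture "every step is a contract" is a typed step that must return both its output and the reason for it, so the ledger entry is written as a side effect of running the step. This is a minimal sketch under assumptions: `StepContract`, `LedgerEntry`, `runStep`, and the two toy steps are hypothetical names for illustration, not the Video Harness API.

```typescript
// Hypothetical types: none of these names come from Video Harness itself.
interface LedgerEntry {
  step: string;
  reason: string;
}

// A step declares its input and output types and must explain its output.
interface StepContract<In, Out> {
  label: string;
  run(input: In): { output: Out; reason: string };
}

// Runs one step and records why it produced its output.
function runStep<In, Out>(
  step: StepContract<In, Out>,
  input: In,
  ledger: LedgerEntry[],
): Out {
  const { output, reason } = step.run(input);
  ledger.push({ step: step.label, reason });
  return output;
}

interface Brief {
  intent: string;
}

interface ShotPlan {
  shots: string[];
}

// Toy stand-ins for the SOURCE and DIRECTOR steps (no LLM call here).
const sourceStep: StepContract<string, Brief> = {
  label: "SOURCE",
  run: (raw) => ({
    output: { intent: raw.trim().toLowerCase() },
    reason: "normalized the raw brief",
  }),
};

const directorStep: StepContract<Brief, ShotPlan> = {
  label: "DIRECTOR",
  run: (brief) => ({
    output: { shots: [`wide: ${brief.intent}`, `close-up: ${brief.intent}`] },
    reason: "planned two shots from the intent",
  }),
};

const runLedger: LedgerEntry[] = [];
const cleanBrief = runStep(sourceStep, "  Make me a PRODUCT video ", runLedger);
const shotPlan = runStep(directorStep, cleanBrief, runLedger);
console.log(shotPlan.shots, runLedger);
```

Because the output type of one step is the input type of the next, wiring steps in the wrong order fails at compile time rather than at render time.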

PROOF CONTRACT

Every render, publish, and spend is locked until human approval. The provenance ledger records why each decision was made. Inspectable, not asserted.
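
The lock-until-approved behavior can be sketched as a small gate: an action is proposed with its cost and reason, and it cannot execute until a human approves it. This is an illustration under assumptions; `ApprovalGate`, `GateRecord`, and the method names are hypothetical, and the approver address is a placeholder.

```typescript
// Hypothetical sketch: names and shapes are assumptions, not the Video Harness API.
type GatedAction = "render" | "publish" | "spend";

interface GateRecord {
  action: GatedAction;
  costUsd: number;
  reason: string;
  approvedBy: string | null; // null until a human signs off
}

class ApprovalGate {
  private readonly records: GateRecord[] = [];

  // Registers a locked action and returns its id in the ledger.
  propose(action: GatedAction, costUsd: number, reason: string): number {
    this.records.push({ action, costUsd, reason, approvedBy: null });
    return this.records.length - 1;
  }

  // Records which human approved the action.
  approve(id: number, approver: string): void {
    const record = this.records[id];
    if (!record) throw new Error(`unknown action id ${id}`);
    record.approvedBy = approver;
  }

  // Runs the callback only if the action has been approved.
  execute<T>(id: number, fn: () => T): T {
    const record = this.records[id];
    if (!record) throw new Error(`unknown action id ${id}`);
    if (record.approvedBy === null) {
      throw new Error(`${record.action} is locked until human approval`);
    }
    return fn();
  }

  // Read-only view of every proposal, approved or not.
  entries(): readonly GateRecord[] {
    return this.records;
  }
}

const gate = new ApprovalGate();
const renderId = gate.propose("render", 0.35, "hero shot needs motion");

let blocked = false;
try {
  gate.execute(renderId, () => "shot.mp4");
} catch {
  blocked = true; // not approved yet, so nothing ran
}

gate.approve(renderId, "producer@example.com");
const rendered = gate.execute(renderId, () => "shot.mp4");
console.log(blocked, rendered, gate.entries());
```

The record keeps the reason and the approver next to the cost, which is what makes the decision inspectable after the fact.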

BOUNDED AUTONOMY

Goal locked, path autonomous. The LLM chooses creative direction, shot composition, model routing. Humans only touch the spend button. A production system, not a chatbot.

MULTI-MODEL ROUTING

Claude Sonnet 4.5 (text/direction) + Seedream (first-frames) + Seedance 2.0 (video). Routes differently for commerce ads vs. music videos vs. risky content.
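
Routing by content type could be as simple as a lookup table. In this sketch the model names are taken from the text above, but everything else is an assumption made for illustration: the `ContentKind` labels, the `variantsPerShot` and `extraHumanReview` fields, and the specific values are hypothetical and do not describe the actual routing policy.

```typescript
// Hypothetical routing table: the policy values below are illustrative assumptions.
type ContentKind = "commerce-ad" | "music-video" | "risky";

interface RoutePlan {
  direction: string;
  firstFrame: string;
  video: string;
  variantsPerShot: number; // assumed knob: how many candidates to render
  extraHumanReview: boolean; // assumed knob: second review before publish
}

const BASE_MODELS = {
  direction: "Claude Sonnet",
  firstFrame: "Seedream",
  video: "Seedance 2.0",
};

const ROUTES: Record<ContentKind, RoutePlan> = {
  "commerce-ad": { ...BASE_MODELS, variantsPerShot: 1, extraHumanReview: false },
  "music-video": { ...BASE_MODELS, variantsPerShot: 3, extraHumanReview: false },
  risky: { ...BASE_MODELS, variantsPerShot: 1, extraHumanReview: true },
};

// Returns the plan for a content kind; the union type rules out unknown kinds.
function routeFor(kind: ContentKind): RoutePlan {
  return ROUTES[kind];
}

console.log(routeFor("risky"));
```

Keeping the table as data means a routing change is a reviewable diff rather than a change buried in prompt text.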

SOURCE RECONSTRUCTION

Weak input → strong internal brief. A messy "make me a product video" becomes a structured creative brief + script strategy + asset plan before any render starts.

COUNTERFACTUAL QA

Quality isn't "does it look good?" It's "is this better than the baseline?" Rubric evidence + comparison deltas. Quality advantage is measured, not vibed.
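
A comparison delta is just the candidate's rubric score minus the baseline's, per criterion. The sketch below assumes integer rubric scores and a pass rule of "mean delta above a threshold"; the criterion names, `compareToBaseline`, and the threshold are hypothetical choices for illustration, not the scoring the product uses.

```typescript
// Hypothetical sketch: rubric criteria and the pass rule are assumptions.
type RubricScores = Record<string, number>;

interface Comparison {
  deltas: RubricScores;
  meanDelta: number;
  beatsBaseline: boolean;
}

// Scores the candidate against the baseline on every baseline criterion.
function compareToBaseline(
  candidate: RubricScores,
  baseline: RubricScores,
  minMeanDelta = 0,
): Comparison {
  const criteria = Object.keys(baseline);
  if (criteria.length === 0) throw new Error("baseline has no criteria");

  const deltas: RubricScores = {};
  let total = 0;
  for (const criterion of criteria) {
    const candidateScore = candidate[criterion];
    const baselineScore = baseline[criterion];
    if (candidateScore === undefined || baselineScore === undefined) {
      throw new Error(`missing score for ${criterion}`);
    }
    const delta = candidateScore - baselineScore;
    deltas[criterion] = delta;
    total += delta;
  }

  const meanDelta = total / criteria.length;
  return { deltas, meanDelta, beatsBaseline: meanDelta > minMeanDelta };
}

const qa = compareToBaseline(
  { promptAdherence: 4, motionStability: 3, brandFit: 5 },
  { promptAdherence: 3, motionStability: 3, brandFit: 3 },
);
console.log(qa); // deltas 1, 0, 2; meanDelta 1; beatsBaseline true
```

A candidate that merely ties the baseline fails under this rule, which matches the idea that quality has to be an advantage, not just an absence of defects.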

DETERMINISTIC PREVIEW

Provider-independent storyboard artifacts. See the decision path before video renders. Founders and investors inspect the plan — not just the output.
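
One way a preview can be deterministic is to give each storyboard an id derived only from its content, so the same plan always produces the same artifact regardless of provider or key order. The sketch below is an assumption about how that might be done: `stableStringify` and `storyboardId` are hypothetical names, and the hash is an FNV-1a style 32-bit hash over UTF-16 code units, suitable for ids but not for security.

```typescript
// Hypothetical sketch: stableStringify and storyboardId are illustrative names.
type Json = string | number | boolean | null | Json[] | { [key: string]: Json };

// Serializes with object keys sorted, so key order never changes the output.
function stableStringify(value: Json): string {
  if (value === null || typeof value !== "object") return JSON.stringify(value);
  if (Array.isArray(value)) {
    return `[${value.map((item) => stableStringify(item)).join(",")}]`;
  }
  const keys = Object.keys(value).sort();
  const body = keys.map(
    (key) => `${JSON.stringify(key)}:${stableStringify(value[key] as Json)}`,
  );
  return `{${body.join(",")}}`;
}

// FNV-1a style 32-bit hash over UTF-16 code units, returned as 8 hex digits.
function fnv1a(text: string): string {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return ("0000000" + (hash >>> 0).toString(16)).slice(-8);
}

// The id depends only on the plan's content, not on how it was built.
function storyboardId(plan: Json): string {
  return fnv1a(stableStringify(plan));
}

const planA: Json = { shots: [{ id: 1, prompt: "wide product shot" }], aspect: "16:9" };
const planB: Json = { aspect: "16:9", shots: [{ prompt: "wide product shot", id: 1 }] };
console.log(storyboardId(planA) === storyboardId(planB)); // true
```

Two reviewers holding the same id know they are looking at the same plan, before any video is rendered.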

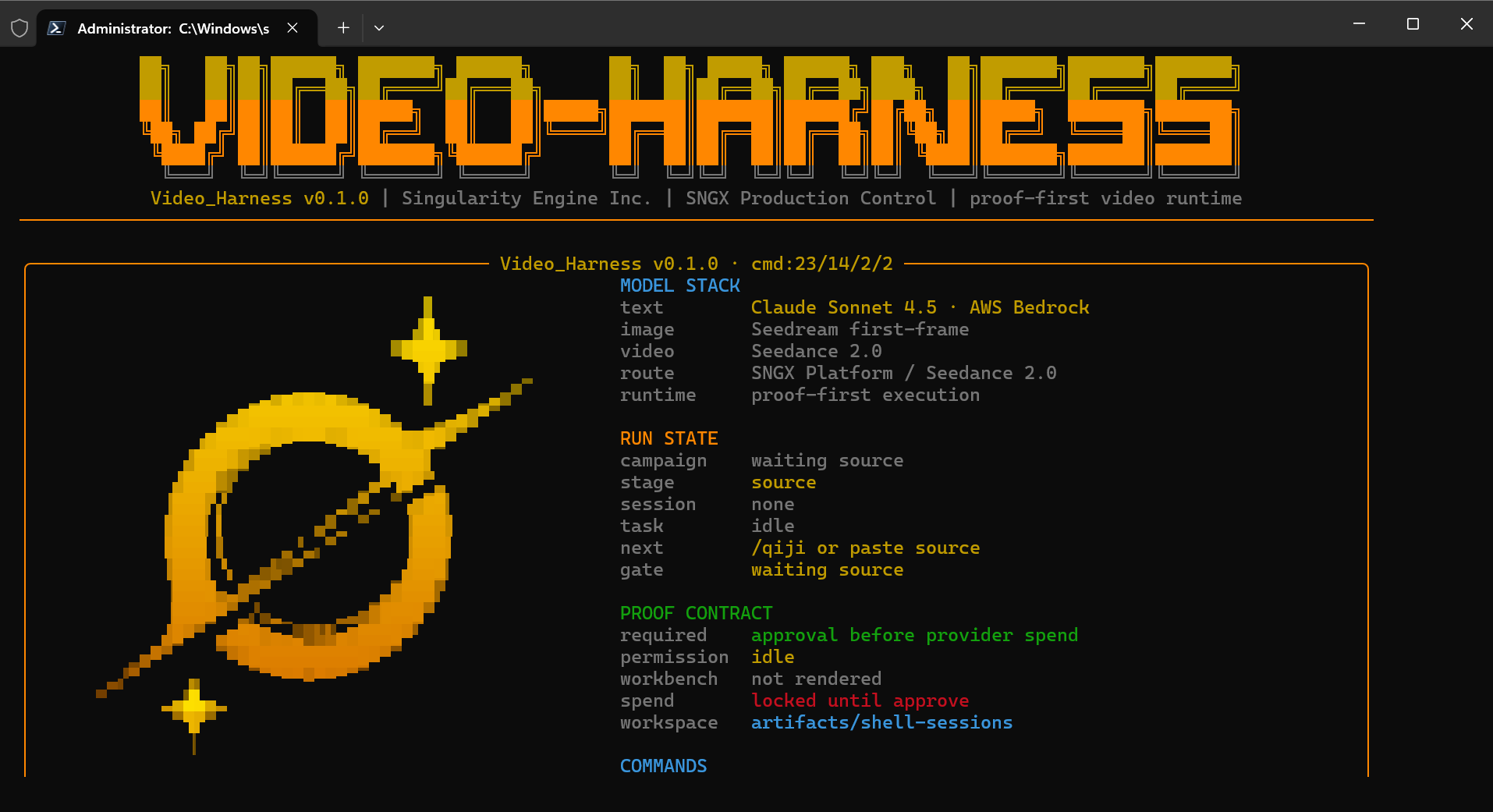

VIDEO HARNESS v0.1.0 // CLI DASHBOARD

CLAUDE SONNET 4.5 · AWS BEDROCK · SEEDANCE 2.0

MODEL STACK + RUN STATE + PROOF CONTRACT // PROOF-FIRST EXECUTION RUNTIME

WHY NOT JUST USE RUNWAY?

Runway, Pika, and Kling Studio are consumer tools: you type a prompt, get a video, and hope it's good. No structured direction, no cost control, no quality evaluation, no audit trail. Great for one-offs. Unusable for production.

Video Harness is infrastructure. It sits between your creative intent and the model providers. It plans, routes, evaluates, and gates. The output is not just a video — it's a video with a provenance ledger proving why every frame exists.

AWS EKS · BEDROCK · POSTGRESQL · TYPESCRIPT · DEPLOYED TO PRODUCTION · REAL MP4 OUTPUT VERIFIED